Cross-channel attribution is a hot topic these days. We’ve been asked by many clients recently what they need to know about attribution and how it could be used to help improve their marketing results. To get answers, we went to industry leader (and current client) Visual IQ and sat down with their CMO, Bill Muller. Bill’s responses to the key questions related to attribution can be found below. This is a must-read for anyone new to attribution or for anyone considering investing in a cross-channel attribution platform.

Q: Can you explain, for folks new to attribution, how cross-channel attribution works? What are the main benefits of using a cross-channel attribution platform?

A: Cross-channel attribution, much like any discipline, can vary widely depending on the degree of sophistication and complexity of the platform that you use. It’s like asking, “How much does a car cost?” Well, it depends on whether it’s a Prius or a Ferrari.

We perform cross-channel attribution using a methodology called “algorithmic” or model-based attribution, which differs dramatically from rules-based methodologies that tend to be flawed and subjective. An algorithmic attribution platform ingests marketing performance data from both digital and non-digital sources. For digital or “taggable” sources, we often use the ad server tracking that a client already has in place. We also use our own pixel to stitch together the various touchpoints in a user’s journey to a conversion.

That data is then fed into an attribution engine, which is a series of algorithms and machine-learning technologies that chew through the data and fractionally attribute credit for a conversion across the various touchpoints experienced by a user. Rather than simply looking at the order in which those touchpoints took place, the engine measures all of the individual components that make up those touchpoints; for example, channel, ad size, creative, keyword, or placement.
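Visual IQ’s engine is proprietary, and the interview doesn’t spell out its math. As a rough illustration of what “fractionally attribute credit” can mean, here is a minimal sketch using Shapley values, a common game-theory approach to data-driven attribution that we are swapping in for illustration; it is not Visual IQ’s algorithm. The function name `shapley_credit` and the conversion numbers are hypothetical, and the sketch works at the channel level, although the same idea can be applied to characteristics such as ad size or keyword.

```python
from itertools import combinations
from math import factorial


def shapley_credit(channels, value):
    """Split conversion value across channels using Shapley values.

    `value` maps a frozenset of channels to the conversion value observed
    for users exposed to that set of channels. Missing sets count as zero.
    """
    n = len(channels)
    credit = {}
    for ch in channels:
        others = [c for c in channels if c != ch]
        total = 0.0
        for k in range(len(others) + 1):
            # Shapley weight for any coalition of size k that excludes `ch`.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                coalition = frozenset(subset)
                # Marginal contribution of adding `ch` to this coalition.
                gain = value.get(coalition | {ch}, 0.0) - value.get(coalition, 0.0)
                total += weight * gain
        credit[ch] = total
    return credit


# Hypothetical conversions for users who saw each combination of channels.
observed = {
    frozenset(): 0.0,
    frozenset({"display"}): 2.0,
    frozenset({"search"}): 6.0,
    frozenset({"display", "search"}): 10.0,
}

print(shapley_credit(["display", "search"], observed))
# → {'display': 3.0, 'search': 7.0}
```

The credits always add up to the value of the full channel set (10 here). Display earns 3 rather than the 2 it produces on its own, because adding it on top of search lifts conversions by 4.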

By doing this across an entire universe of users who are exposed to your marketing efforts, the software can calculate success metrics across all channels to show exactly how much credit each touchpoint and each channel deserves. Almost always, when that calculation gets performed, you get a very different picture of which channels, campaigns, and granular-level tactics are contributing to your overall success.

The main benefits are better decision-making and better budget allocation. Ultimately, what people do with the attribution output is reallocate budget: they take it away from the channels, campaigns, and tactics they have historically overvalued (the losers) and give it to the ones they previously undervalued (the winners).
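To make the “losers to winners” idea concrete, here is a minimal sketch of a single greedy reallocation step. It assumes efficiency is measured as attributed conversions per dollar and that every channel has nonzero spend; the function name `reallocate`, the 10% shift, and all figures are hypothetical, and a real optimizer would also account for diminishing returns.

```python
def reallocate(spend, credited, shift_fraction=0.1):
    """Shift a share of budget from the least to the most efficient channel.

    Efficiency = attributed conversions per dollar of spend (assumes spend > 0).
    Returns the new spend plan plus the loser and winner channel names.
    """
    efficiency = {ch: credited[ch] / spend[ch] for ch in spend}
    loser = min(efficiency, key=efficiency.get)
    winner = max(efficiency, key=efficiency.get)
    moved = spend[loser] * shift_fraction
    plan = dict(spend)
    plan[loser] -= moved
    plan[winner] += moved
    return plan, loser, winner


spend = {"display": 5000.0, "search": 10000.0}
# Hypothetical conversion credit for the same spend under two models.
last_click_credit = {"display": 10.0, "search": 200.0}
algorithmic_credit = {"display": 90.0, "search": 120.0}

print(reallocate(spend, last_click_credit))   # display looks like the loser
print(reallocate(spend, algorithmic_credit))  # search looks like the loser
```

The same spend produces opposite recommendations depending on which credit model feeds it, which is why the attribution method matters more than the reallocation rule.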

Q: Does the platform tend to work better for certain industries?

A: To determine fit, we tend to look at “business models” more than “industries.” Until recently, attribution had been a direct-response endeavor, meaning that companies that use digital media, alone or combined with offline media, to produce hard-and-fast conversions, such as an e-commerce transaction, a lead, or a quote, benefit most from the software. Many industries align with this type of business model.

In terms of attribution, the business models that have historically been left out in the cold belong to companies that do not have those types of transactions in place. Their marketing objective is primarily to generate brand engagement, because they do not have a direct line to their conversion event.

Think about pharmaceutical companies, for example. You do not buy a drug on their website, or as a direct result of seeing their TV advertisement, but their marketing activities do cause you to experience some brand engagement. Ultimately, you may be prescribed the drug and purchase it, but there is no measurable link between their marketing and your purchase, so there are no conclusions to draw.

This business model, as a result, has been difficult for attribution to conquer in the past because there hasn’t been a tie between media stimulation and the eventual consumption of an end product. Until recently.

Q: What kinds of recommendations will an attribution platform make? Are they typically budget related or otherwise? Are they typically real-time, on-going, or one-time recommendations?

A: The recommendations are typically budget-related, since we are talking about spending money on individual tactics: moving budget off less successful ones and onto more successful ones. They are typically not real-time but daily, because we can only make recommendations at the pace at which our attribution engine is fed performance data.

The recommendations do, however, absolutely need to be ongoing. Much like a search campaign, it’s not ‘set it and forget it.’ The environment in which you operate is not static: it shifts with the marketplace, with what competitors are doing, with econometric factors, with global events, and so on. Your spending constantly needs to be adjusted to that dynamic marketplace, so this is an ongoing process, not a one-time recommendation.

Q: How drastic will the recommended changes be?

A: Recommendations can be as granular as the characteristics of the data provided. When many people think of attribution, they think only about the chronology of the touchpoints that preceded a conversion. They think, ‘This happened first, this happened second, this happened third, and I really can’t control those things.’

What they often don’t realize is that these touchpoints are made up of various characteristics. For a display ad, there is size, placement, offer, and publisher to consider. For the search channel, one can consider whether it was paid or organic, the keywords, impressions, or clicks. So the recommendations that come out of our application are often things like, “Stop spending $500 a month on this ad, of this size, with this creative, on this publisher, on these days of the week. Now take that money and put it into this keyword, on this search engine, with this creative and this offer, on these days of the week.” We include every characteristic of every touchpoint in the model to find out which ones have the most impact on a client’s overall success.
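One way to picture characteristic-level recommendations is to store each touchpoint with its characteristics and the fractional credit it earned, then roll cost and credit up by whichever characteristics you want to compare. This is a minimal sketch under that assumption; the field names, the `cost_per_credit` function, and the numbers are hypothetical, and the credit values are taken as given rather than computed here.

```python
from collections import defaultdict


def cost_per_credit(touchpoints, dims):
    """Roll touchpoints up by the chosen characteristics.

    Returns {characteristic combination: cost per attributed conversion}.
    """
    cost = defaultdict(float)
    credit = defaultdict(float)
    for tp in touchpoints:
        key = tuple(tp[d] for d in dims)
        cost[key] += tp["cost"]
        credit[key] += tp["credit"]
    # Zero credit maps to infinity: spend with no measured contribution.
    return {
        key: (cost[key] / credit[key] if credit[key] else float("inf"))
        for key in cost
    }


# Hypothetical display touchpoints with already-computed fractional credit.
touchpoints = [
    {"channel": "display", "size": "300x250", "publisher": "pubA", "cost": 2.0, "credit": 0.5},
    {"channel": "display", "size": "300x250", "publisher": "pubA", "cost": 2.0, "credit": 0.5},
    {"channel": "display", "size": "728x90", "publisher": "pubB", "cost": 3.0, "credit": 0.0},
    {"channel": "display", "size": "728x90", "publisher": "pubC", "cost": 0.5, "credit": 0.25},
]

report = cost_per_credit(touchpoints, ("size", "publisher"))
for key, cpa in sorted(report.items(), key=lambda kv: kv[1]):
    print(key, cpa)
```

Sorting by cost per attributed conversion surfaces the “stop spending here, move it there” candidates at whatever level of detail the chosen dimensions allow.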

The recommendations can also be as dramatic as, “Stop spending on certain placements altogether,” or the opposite. We had a client recently that was going to eliminate spending on one display publisher altogether. When they looked at their attribution results, they recognized that instead of it being their worst publisher, it was the publisher that most contributed to their success. They then tripled the amount of spend on the publisher that they were originally going to eliminate from their marketing mix.

Q: Are there channels (Paid Search, SEO, Offline, etc.) that repeatedly prove to drive more or less value than previously believed?

A: Yes. Many clients are heavily invested in paid search, but we’ve found that paid search is one of the channels that tends to be overvalued across the board under a last-click methodology.

In other words, most of the world uses a last-click methodology to assign conversion credit. If an individual had four different touchpoints prior to a conversion, odds are you don’t have a methodology in place that can link those four touchpoints together. You may not even know that the user was touched four times. All you know is that the person converted after searching and clicking a paid search ad.

Attribution allows you to tie together the otherwise unknown factors. If somebody was exposed to impressions of a display ad five times prior to their click on a paid search ad, and it ultimately led to a conversion, we can see that.
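To see how last-click hides earlier touchpoints, here is a minimal sketch that scores the journey just described (five display impressions, then a paid search click) under last-click and under a simple linear split. Both are rules-based models of the kind criticized earlier, and neither is what Visual IQ uses; the linear model is only a stand-in to show that the display impressions exist at all. The function names are hypothetical.

```python
def last_click(path):
    """Rules-based: the final touchpoint gets 100% of the credit."""
    credit = dict.fromkeys(path, 0.0)
    credit[path[-1]] = 1.0
    return credit


def linear(path):
    """Rules-based: every touchpoint in the path gets an equal share."""
    credit = dict.fromkeys(path, 0.0)
    for channel in path:
        credit[channel] += 1.0 / len(path)
    return credit


# Five display impressions, then a paid search click that converts.
path = ["display"] * 5 + ["paid_search"]

print(last_click(path))  # display gets nothing
print(linear(path))      # display gets about 5/6 of the credit
```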

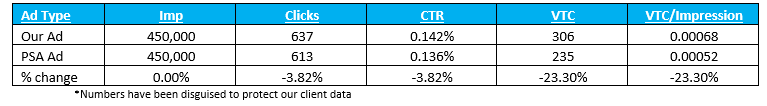

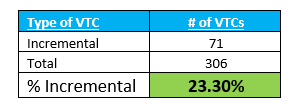

Q: How does the attribution model handle view-through conversions?

A: Our methodology ingests not only touchpoints that resulted in clicks, but also touchpoints where there was only an impression; you do not have to click to be cookied, for example. When a touchpoint is analyzed, we look at all of its constituent parts: its size, its publisher, its placement.

Using that data, our solution then calculates how much value a “mere” impression had in the grand scheme of things: what was the difference in conversion performance between people who were exposed to the ad and people who were not?
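The exposed-versus-unexposed comparison can be sketched as a simple difference in conversion rates. This is a minimal sketch, assuming the two groups are otherwise comparable (for example, a randomized holdout); the function name `view_through_lift` and the counts are hypothetical, and the interview does not say how Visual IQ controls for differences between the groups.

```python
def view_through_lift(exposed_users, exposed_conv, holdout_users, holdout_conv):
    """Compare conversion rates of exposed vs. unexposed users.

    Assumes the two groups are otherwise comparable (e.g. a randomized
    holdout); without that, the difference mixes ad effect with selection bias.
    """
    rate_exposed = exposed_conv / exposed_users
    rate_holdout = holdout_conv / holdout_users
    lift = rate_exposed - rate_holdout
    # Conversions among exposed users that the impression itself plausibly drove.
    incremental = lift * exposed_users
    return lift, incremental


lift, incremental = view_through_lift(100_000, 1_200, 100_000, 1_000)
print(f"lift: {lift:.4f}, incremental conversions: {incremental:.0f}")
# → lift: 0.0020, incremental conversions: 200
```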

Q: Where do you see attribution technology evolving over the next five years? What will we be able to measure and/or optimize better by 2020?

A: As I mentioned previously, until now attribution has very much been a direct-response technology. Recently, however, Visual IQ released a methodology that allows us to extend our solutions well beyond direct-response business models. Instead of ingesting direct-response conversions, it uses brand engagement touches (for example, first visits to a website, video views, and media asset downloads) to come up with a common brand engagement score. The attribution product then optimizes, or makes recommendations on how to maximize, that brand engagement score.
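The interview doesn’t describe how the brand engagement score is built. As one plausible reading, here is a minimal sketch that collapses engagement events into a weighted sum; the event names, the weights, and the `engagement_score` function are all hypothetical.

```python
# Hypothetical event names and weights, for illustration only.
ENGAGEMENT_WEIGHTS = {
    "first_visit": 1.0,
    "video_view": 2.0,
    "asset_download": 5.0,
}


def engagement_score(events, weights=ENGAGEMENT_WEIGHTS):
    """Collapse a user's brand engagement events into one score.

    Events without a weight (e.g. a repeat page view) contribute nothing.
    """
    return sum(weights.get(event, 0.0) for event in events)


journey = ["first_visit", "video_view", "video_view", "asset_download"]
print(engagement_score(journey))
# → 10.0
```

A score like this can then play the role a conversion plays in direct response, giving the attribution engine something to credit and optimize toward.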

Not only does this allow us to serve companies whose marketing is purely about brand engagement, but it also allows us to help the side of the house that has not been able to benefit from attribution in the past. And frankly, at some companies, brand spending far outweighs direct-response spending.

Q: What makes Visual IQ different from the other cross-channel attribution vendors in the space?

A: Part of it is our legacy: we were one of the first attribution vendors in the space, and the first to offer algorithmic attribution.

From the very beginning, we tackled granularity. We let machine learning and mathematical modeling do the calculations so that the data we receive tells the story. Because we’ve done this from the start, we’ve been able to keep raising the sophistication of our product.

Visual IQ’s products are smarter products. We’ve continued to innovate in areas such as brand attribution and offline media attribution, and we have a television attribution product. We consistently deliver new features, benefits, and value to our clients before our competitors do.

We’ve also been working with enterprise-sized clients since our company’s inception. The largest, most successful brands in the marketplace and some of the most demanding marketers in the world are using our products. We’ve developed our products over the past decade based on their needs and demands.

If we can bring in 17 different channels from one of the world’s largest credit card companies, across multiple countries and business units, and provide them with actionable recommendations that generate millions of dollars’ worth of media efficiency, then we certainly have the ability to handle 99 percent of the businesses out there. Without our long history of innovation and continuous product improvement, we wouldn’t have that capability today.

Q: For those who are interested in learning more about your platform, what’s the best way for them to get in touch with you?

A: If you have any questions about cross-channel attribution, or want to learn whether Visual IQ attribution software is right for your business, please email me at Bill.muller@visualiq.com.

For folks trying to better understand where we stand in the attribution space, we have been at the top of the last three Wave Reports on our market. By talking to Visual IQ, you can rest assured that you are truly talking to the industry leader.

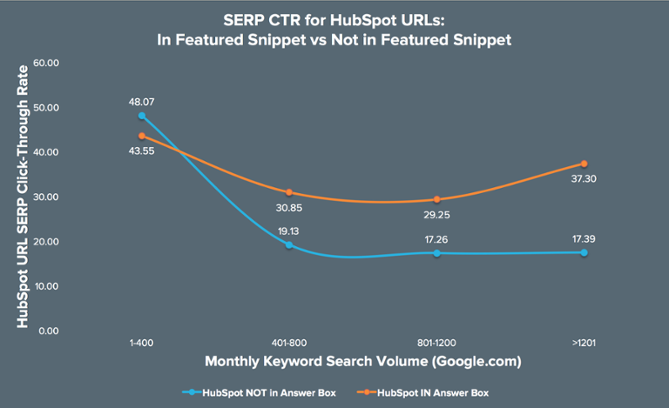

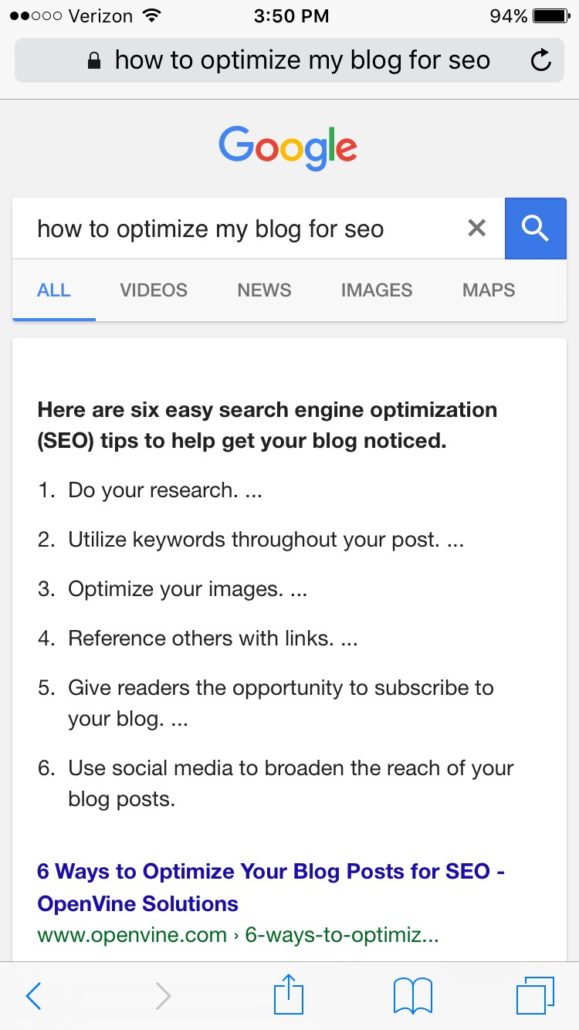

Featured Snippets take up substantial real estate in Google’s SERP. For the mobile user, Featured Snippets can occupy the entire above-the-fold experience. With all the space they consume, Featured Snippets practically scream to a searcher, “I can give you what you need!” or “Click me, I ranked above the rest!” In many cases, a user will respond.
